AI · Reinforcement Learning · Python

Project Lambda

AI Agents Playing Counter-Strike Using Only Visual Input

Overview

Project Lambda builds two AI agents that play Counter-Strike: Global Offensive using only visual input from the screen. Unlike traditional aimbots, which read game memory to obtain player coordinates, these agents perceive and react to the game as a human would: through pixels alone.

The project compares two fundamentally different AI approaches: classical computer vision with graph-based pathfinding, versus a deep neural network trained end-to-end on professional gameplay footage using behavioural cloning and offline reinforcement learning.

Agents

Agent 1

Vision + Pathfinding

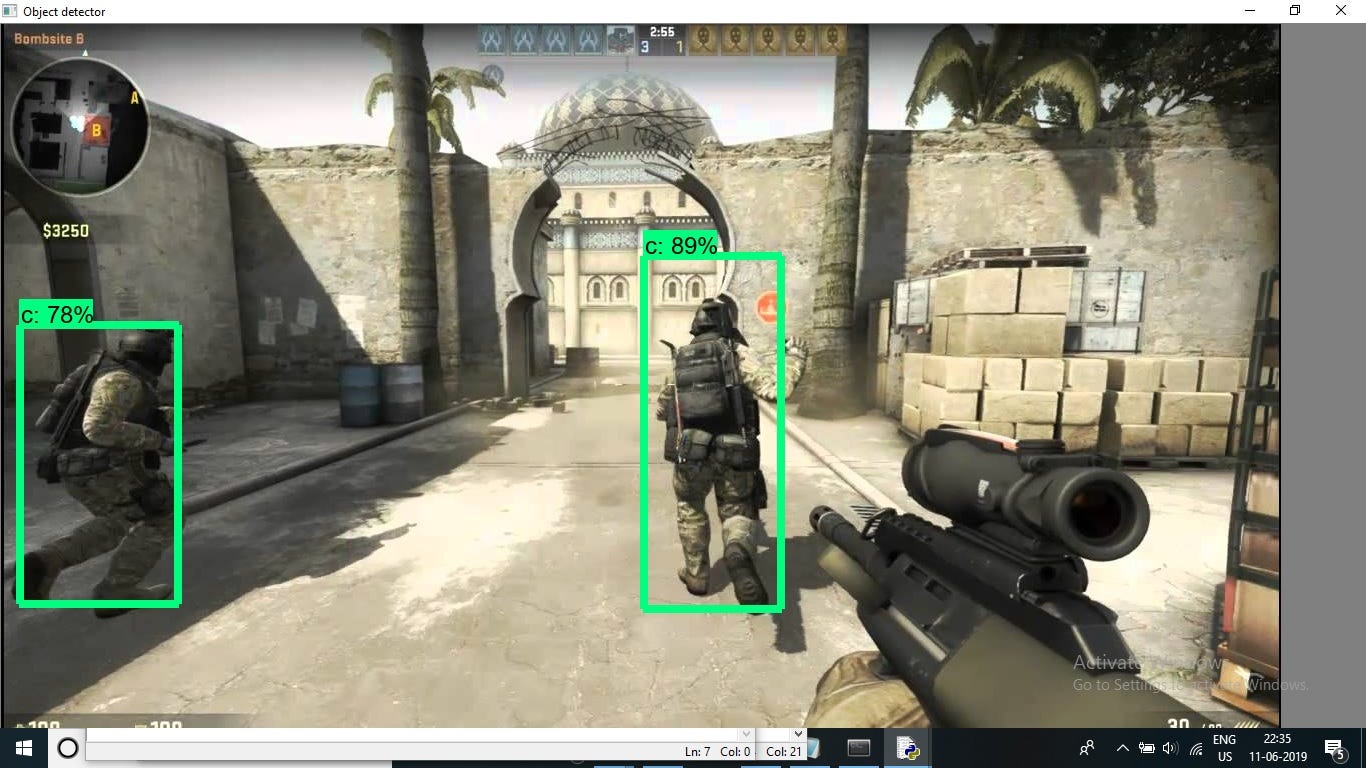

Combines YOLOv7 object detection with A* pathfinding. Detects players and game objects from raw screen pixels, then navigates the map using classical graph search.
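To make the pathfinding half concrete, here is a minimal A* sketch in Python. It assumes the map has already been reduced to a small occupancy grid (0 = walkable, 1 = wall) with 4-connected moves of unit cost. The grid, the `astar` function name, and the Manhattan heuristic are illustrative assumptions, not the project's actual navigation graph or code.

```python
import heapq


def astar(grid, start, goal):
    """Return the shortest 4-connected path from start to goal, or None.

    grid is a list of rows; 0 marks a walkable cell and 1 marks a wall.
    Cells are (row, col) tuples. This is an illustrative sketch only.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # (f = g + h, g, cell)
    came_from = {}
    best_cost = {start: 0}

    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk the parent links back to the start.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost[current]:
            continue  # stale heap entry; a cheaper route was found already
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = current
                    heapq.heappush(
                        open_heap, (new_cost + heuristic(nxt), new_cost, nxt)
                    )
    return None  # goal unreachable


# Hypothetical 4x4 map fragment used only for this example.
GRID = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
PATH = astar(GRID, (0, 0), (3, 3))
print(PATH)
```

In a full agent, the start cell would presumably come from the agent's estimated position and the goal from a detected object or waypoint; how Project Lambda derives either from the YOLOv7 detections is not specified here.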

Agent 2

Behavioural Cloning

A deep neural network trained on professional CS:GO match footage using behavioural cloning and offline reinforcement learning. Operates purely on visual input, with no game memory access.
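Behavioural cloning treats imitation as supervised learning: the policy is fitted to predict the expert's action from the observation. The toy sketch below illustrates only that idea, using a linear softmax policy and three hand-made demonstrations in place of a deep network and real footage. The feature layout, action indices, and the names `train_bc` and `act` are hypothetical, and the offline reinforcement learning stage is not shown.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def logits_for(weights, obs):
    """One logit per action: dot product of that action's weights with obs."""
    return [sum(w * x for w, x in zip(row, obs)) for row in weights]


def train_bc(demos, n_features, n_actions, lr=0.5, epochs=200):
    """Fit a linear softmax policy to (observation, expert_action) pairs.

    Minimises cross-entropy between the policy's action distribution and
    the expert's chosen action with plain stochastic gradient descent.
    """
    weights = [[0.0] * n_features for _ in range(n_actions)]
    for _ in range(epochs):
        for obs, action in demos:
            probs = softmax(logits_for(weights, obs))
            for a in range(n_actions):
                # Gradient of cross-entropy w.r.t. logit a: p_a - 1[a == expert]
                grad = probs[a] - (1.0 if a == action else 0.0)
                for j in range(n_features):
                    weights[a][j] -= lr * grad * obs[j]
    return weights


def act(weights, obs):
    """Greedy action: index of the largest logit."""
    scores = logits_for(weights, obs)
    return max(range(len(scores)), key=scores.__getitem__)


# Hypothetical demonstrations: obs = [enemy_left, enemy_right, bias].
# Actions (assumed for illustration): 0 = turn left, 1 = turn right, 2 = move forward.
DEMOS = [
    ([1.0, 0.0, 1.0], 0),
    ([0.0, 1.0, 1.0], 1),
    ([0.0, 0.0, 1.0], 2),
]
POLICY = train_bc(DEMOS, n_features=3, n_actions=3)
PREDICTED = [act(POLICY, obs) for obs, _ in DEMOS]
print(PREDICTED)
```

A pixel-based agent would replace the three-number observation with image frames and the linear map with a convolutional or similar network, but the training signal (match the expert's action) stays the same.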